by Julián Méndez

Abstract:

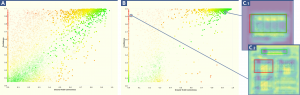

We present ConCorDia, a visualization tool for post hoc analysis of the evolution of a convolutional neural network (CNN) during the training process. Understanding and evaluating CNNs for object classification in images can be supported by “visual explanations” like heatmaps created from the model to localize and highlight regions of interest in the images that lead to the decisions of the system. ConCorDia was designed and implemented with experts to support the exploration and detailed analysis of the huge amount of resulting visual representations in order to assess the coherence of the training. We describe the value that can be gained from usage scenarios of ConCorDia towards explainable artificial intelligence.

Note:

The technical report and repository are part of an internal database; the source code and/or documentation can be requested from the members of the IMLD.